Autonomous Drone Exploration from Natural Language

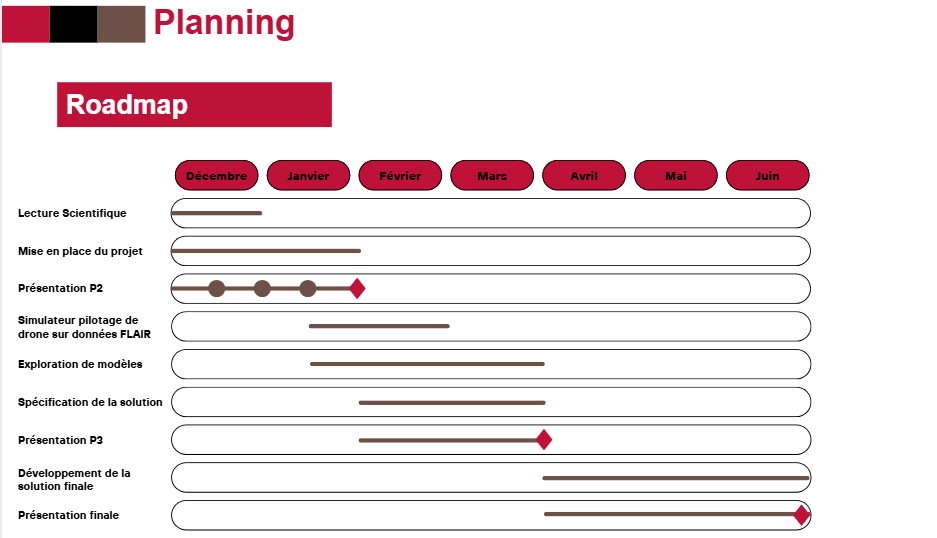

Telecom Paris final project (ongoing) exploring how vision-language models can interpret large aerial scenes from FLAIR-HUB and guide a drone in simulation toward mission-defined target areas.

Evaluating VLMs on FLAIR-HUB aerial scenes

FLAIR-HUB is a large-scale multimodal Earth observation dataset released by IGN, combining very high resolution aerial imagery with aligned satellite, topographic, and historical data across a wide range of French landscapes. In this project, I use assembled aerial tiles from FLAIR-HUB as realistic large-scale scenes for vision-language model evaluation.

The first stage of the project focused on testing whether different VLMs can understand a complex aerial scene as a whole: describing its global layout, identifying major structures such as roads, rivers, built-up areas, or the stadium, and highlighting potentially relevant zones of interest. This part is exploratory and is still being extended with additional prompts and model comparisons.

From scene understanding to action

After the fixed-image evaluation, the next step was to move toward a decision loop. The project now explores how a VLM can use aerial observations not only to describe a scene, but also to guide actions inside a drone simulator.

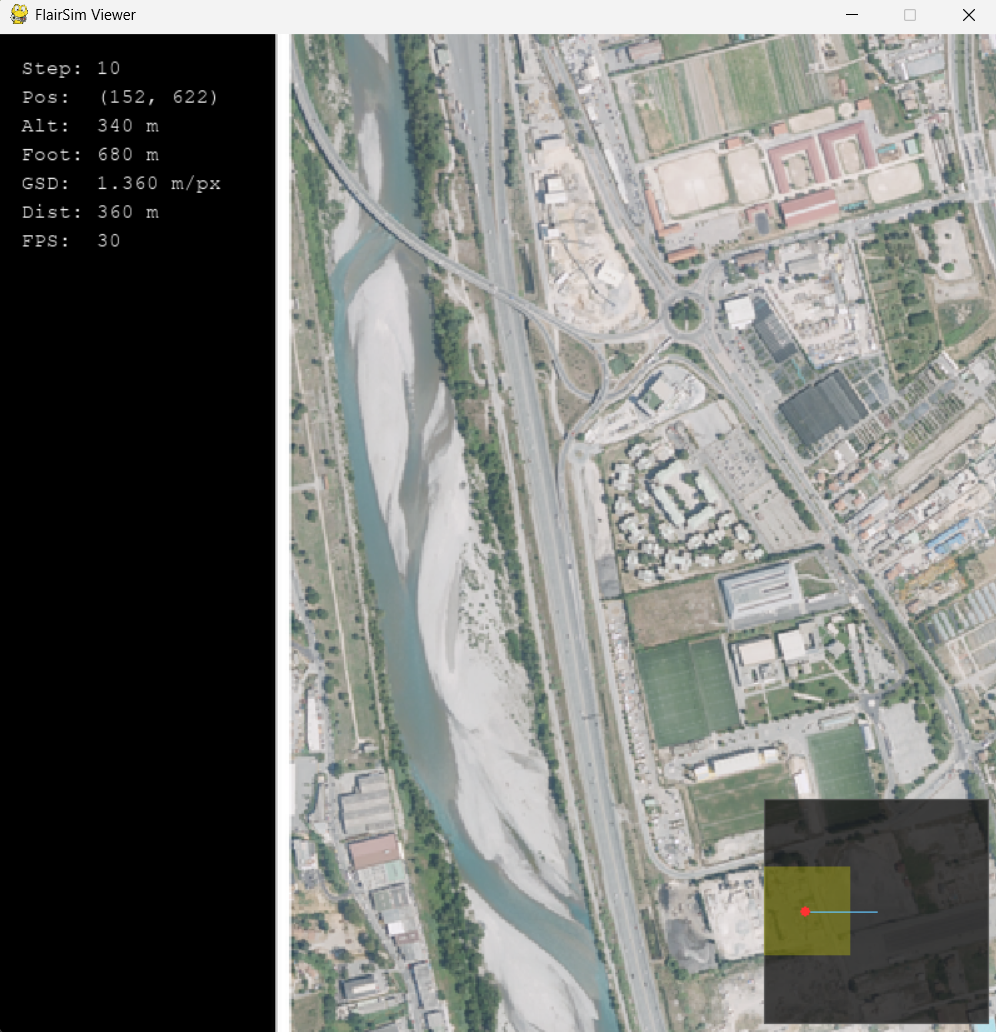

Testing VLM-guided navigation in FlairSim

FlairSim is an in-house drone simulator we built to test perception and navigation over FLAIR-HUB aerial imagery. It allows an external agent to receive the current observation, reason over the scene, and return actions through a simple control loop. In the current setup, a VLM is connected through Ollama and tasked with moving from a distant starting point toward a mission-defined target zone. The model can climb to gain context, identify the target area, move toward it, and descend to simulate a final approach. This work is still ongoing; the next steps are to refine the prompts, compare model behavior more systematically, and evaluate more realistic search tasks.
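To make the control loop concrete, here is a minimal Python sketch of an observe, decide, act cycle. It is not FlairSim's actual interface: `DroneState`, `parse_action`, `apply_action`, `run_episode`, the JSON action format, and the 10-unit step size are all assumptions made for illustration. The VLM is replaced by a scripted stand-in so the example runs without a model; in a real setup, `decide` would presumably send the current aerial image and mission prompt to a model served by Ollama and return its text reply.

```python
import json
from dataclasses import dataclass
from typing import Callable

@dataclass
class DroneState:
    # Hypothetical simulator state: planar position and altitude, arbitrary units.
    x: float
    y: float
    altitude: float

# Hypothetical action vocabulary; the real simulator may expose something different.
MOVES = {"north": (0, 1), "south": (0, -1), "east": (1, 0), "west": (-1, 0)}
VALID = set(MOVES) | {"climb", "descend", "hover", "done"}

def parse_action(reply: str) -> dict:
    """Parse a model reply as JSON; fall back to hovering if it is malformed."""
    try:
        action = json.loads(reply)
    except json.JSONDecodeError:
        return {"action": "hover"}
    if not isinstance(action, dict) or action.get("action") not in VALID:
        return {"action": "hover"}
    return action

def apply_action(state: DroneState, action: dict, step: float = 10.0) -> DroneState:
    """Return the next state after one action (toy kinematics, no physics)."""
    name = action["action"]
    if name in MOVES:
        dx, dy = MOVES[name]
        return DroneState(state.x + dx * step, state.y + dy * step, state.altitude)
    if name == "climb":
        return DroneState(state.x, state.y, state.altitude + step)
    if name == "descend":
        return DroneState(state.x, state.y, max(0.0, state.altitude - step))
    return state  # hover: nothing changes

def run_episode(state: DroneState, decide: Callable[[DroneState], str],
                max_steps: int = 50) -> list:
    """Observe, ask the agent for an action, apply it; stop on 'done' or max_steps."""
    trajectory = [state]
    for _ in range(max_steps):
        action = parse_action(decide(state))
        if action["action"] == "done":
            break
        state = apply_action(state, action)
        trajectory.append(state)
    return trajectory

def scripted_policy(target_x: float = 30.0, cruise_altitude: float = 30.0):
    """Stand-in for the VLM: climb for context, fly east to the target, descend."""
    def decide(state: DroneState) -> str:
        if state.x < target_x and state.altitude < cruise_altitude:
            return '{"action": "climb"}'
        if state.x < target_x:
            return '{"action": "east"}'
        if state.altitude > 0:
            return '{"action": "descend"}'
        return '{"action": "done"}'
    return decide

if __name__ == "__main__":
    for s in run_episode(DroneState(0.0, 0.0, 0.0), scripted_policy()):
        print(s)
```

Passing the decision function in as a plain callable keeps the loop independent of any particular model, which would make it easier to swap models when comparing their behavior. The fallback to `hover` is one possible way to handle replies that are not valid JSON, which is a common failure mode when a language model is asked for structured output.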

Current status and next steps

This project is still ongoing. So far, the experiments show that VLMs can produce meaningful global descriptions of large aerial scenes and can already be integrated into a perception-decision-action loop in simulation. The next steps are to improve the navigation setup, test more mission prompts, and move from simple target zones toward more realistic object-search scenarios.