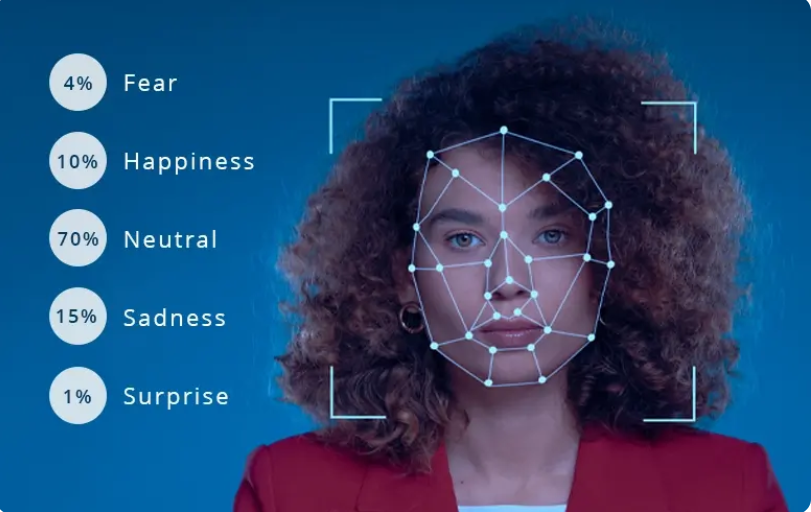

Facial Emotion Recognition with AI

A deep learning project exploring facial emotion recognition through CNN architecture comparison, data augmentation, and real-time webcam inference.

Framing the problem

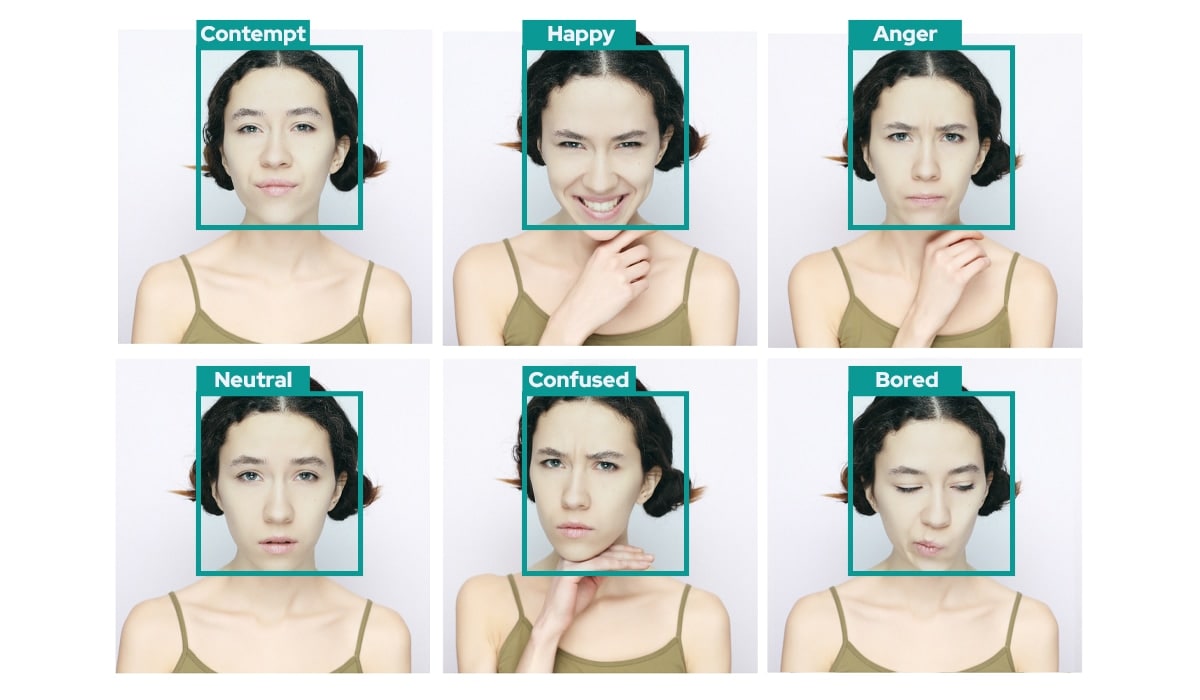

This project explored facial emotion recognition as a computer vision task, with the objective of classifying the seven basic emotions: neutral, happiness, sadness, fear, anger, disgust, and surprise.

Beyond simple image classification, the challenge was to build a system capable of extracting meaningful emotional cues from low-resolution facial data, despite class imbalance, visual ambiguity, and overlapping expressions.

Data Foundation

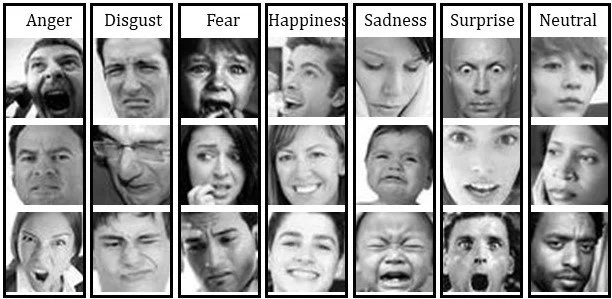

The project was built on FER2013, a public dataset containing roughly 35,900 grayscale facial images at 48×48 resolution, labeled across seven emotion categories.

Preparing this data correctly was essential: the images were normalized, wrapped in PyTorch-compatible loading pipelines, and organized into separate sets for training and evaluation.
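As a rough illustration of what such a pipeline can look like, here is a minimal sketch of a PyTorch `Dataset` for 48×48 grayscale faces. The class name `FaceEmotionDataset`, the normalization constants (mean 0.5, std 0.5), and the random stand-in data are assumptions made for the example, not details taken from the project.

```python
import numpy as np
import torch
from torch.utils.data import Dataset, DataLoader


class FaceEmotionDataset(Dataset):
    """Hypothetical wrapper around uint8 faces of shape (N, 48, 48) and labels 0..6."""

    def __init__(self, images, labels, mean=0.5, std=0.5):
        if images.ndim != 3 or images.shape[1:] != (48, 48):
            raise ValueError("expected images of shape (N, 48, 48)")
        self.images = images
        self.labels = labels
        self.mean = mean  # assumed normalization constants
        self.std = std

    def __len__(self):
        return len(self.images)

    def __getitem__(self, idx):
        # Scale pixels to [0, 1], then standardize to roughly [-1, 1].
        x = torch.from_numpy(self.images[idx].astype(np.float32) / 255.0)
        x = (x - self.mean) / self.std
        # Add the channel dimension expected by Conv2d: (1, 48, 48).
        return x.unsqueeze(0), int(self.labels[idx])


# Random stand-in data in place of the real FER2013 images.
rng = np.random.default_rng(0)
images = rng.integers(0, 256, size=(16, 48, 48), dtype=np.uint8)
labels = rng.integers(0, 7, size=16)

loader = DataLoader(FaceEmotionDataset(images, labels), batch_size=8, shuffle=True)
batch_x, batch_y = next(iter(loader))
print(batch_x.shape, batch_y.shape)
```

Keeping normalization inside `__getitem__` means the same transformation is applied to training and evaluation data without extra bookkeeping.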

Architecture study

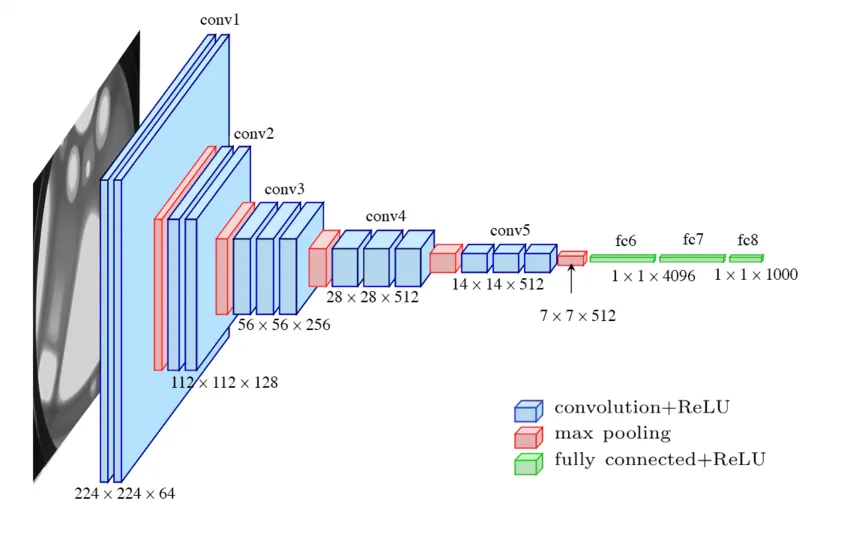

A key part of the project was comparing several convolutional architectures, including custom CNNs, VGG-style models, and ResNet-based approaches.

This comparison helped evaluate the trade-offs between model complexity, convergence stability, and generalization on compact grayscale facial inputs. ResNet ultimately emerged as the most reliable solution, offering the best balance between performance and robustness across the different emotion classes.
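To make the ResNet idea concrete, the sketch below shows a small residual network sized for single-channel 48×48 inputs. It is an illustrative stand-in, not the architecture used in the project: the names `ResidualBlock` and `TinyResNet`, the channel widths, and the depth are all assumptions.

```python
import torch
from torch import nn


class ResidualBlock(nn.Module):
    """Two 3x3 convolutions with a skip connection (basic ResNet-style block)."""

    def __init__(self, in_ch, out_ch, stride=1):
        super().__init__()
        self.conv1 = nn.Conv2d(in_ch, out_ch, 3, stride=stride, padding=1, bias=False)
        self.bn1 = nn.BatchNorm2d(out_ch)
        self.conv2 = nn.Conv2d(out_ch, out_ch, 3, padding=1, bias=False)
        self.bn2 = nn.BatchNorm2d(out_ch)
        # Project the input when the shape changes so the addition is valid.
        self.shortcut = nn.Identity()
        if stride != 1 or in_ch != out_ch:
            self.shortcut = nn.Sequential(
                nn.Conv2d(in_ch, out_ch, 1, stride=stride, bias=False),
                nn.BatchNorm2d(out_ch),
            )

    def forward(self, x):
        out = torch.relu(self.bn1(self.conv1(x)))
        out = self.bn2(self.conv2(out))
        return torch.relu(out + self.shortcut(x))


class TinyResNet(nn.Module):
    """Hypothetical compact ResNet for (N, 1, 48, 48) inputs and 7 classes."""

    def __init__(self, num_classes=7):
        super().__init__()
        self.stem = nn.Sequential(
            nn.Conv2d(1, 32, 3, padding=1, bias=False),
            nn.BatchNorm2d(32),
            nn.ReLU(),
        )
        self.layers = nn.Sequential(
            ResidualBlock(32, 32),
            ResidualBlock(32, 64, stride=2),   # 48x48 -> 24x24
            ResidualBlock(64, 128, stride=2),  # 24x24 -> 12x12
        )
        self.head = nn.Sequential(
            nn.AdaptiveAvgPool2d(1), nn.Flatten(), nn.Linear(128, num_classes)
        )

    def forward(self, x):
        return self.head(self.layers(self.stem(x)))


model = TinyResNet().eval()
with torch.no_grad():
    logits = model(torch.zeros(2, 1, 48, 48))
print(logits.shape)
```

The skip connection lets gradients bypass the convolutions, which is one commonly cited reason residual networks tend to converge more stably than plain stacks of the same depth.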

Training Strategy

The training pipeline was built in PyTorch with the Adam optimizer, mini-batch data loading, and evaluation repeated across epochs.
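A training loop of this kind can be sketched as follows. The function names `train` and `evaluate`, the cross-entropy loss, the learning rate, and the toy linear model on random data are assumptions chosen to keep the example short. They are not the project's actual settings.

```python
import torch
from torch import nn
from torch.utils.data import DataLoader, TensorDataset


def evaluate(model, loader, device="cpu"):
    """Return classification accuracy over a loader."""
    model.eval()
    correct = total = 0
    with torch.no_grad():
        for x, y in loader:
            x, y = x.to(device), y.to(device)
            pred = model(x).argmax(dim=1)
            correct += (pred == y).sum().item()
            total += y.numel()
    return correct / max(total, 1)


def train(model, train_loader, val_loader, epochs=3, lr=1e-3, device="cpu"):
    """Train with Adam and record validation accuracy after every epoch."""
    model.to(device)
    optimizer = torch.optim.Adam(model.parameters(), lr=lr)
    criterion = nn.CrossEntropyLoss()  # assumed loss for 7-way classification
    history = []
    for _ in range(epochs):
        model.train()
        for x, y in train_loader:
            x, y = x.to(device), y.to(device)
            optimizer.zero_grad()
            loss = criterion(model(x), y)
            loss.backward()
            optimizer.step()
        history.append(evaluate(model, val_loader, device))
    return history


# Toy run on random tensors, standing in for the real data and model.
torch.manual_seed(0)
data = TensorDataset(torch.randn(64, 1, 48, 48), torch.randint(0, 7, (64,)))
toy_model = nn.Sequential(nn.Flatten(), nn.Linear(48 * 48, 7))
history = train(
    toy_model,
    DataLoader(data, batch_size=16, shuffle=True),
    DataLoader(data, batch_size=16),
    epochs=2,
)
print(history)
```

Recording one accuracy value per epoch is what makes validation curves possible later on.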

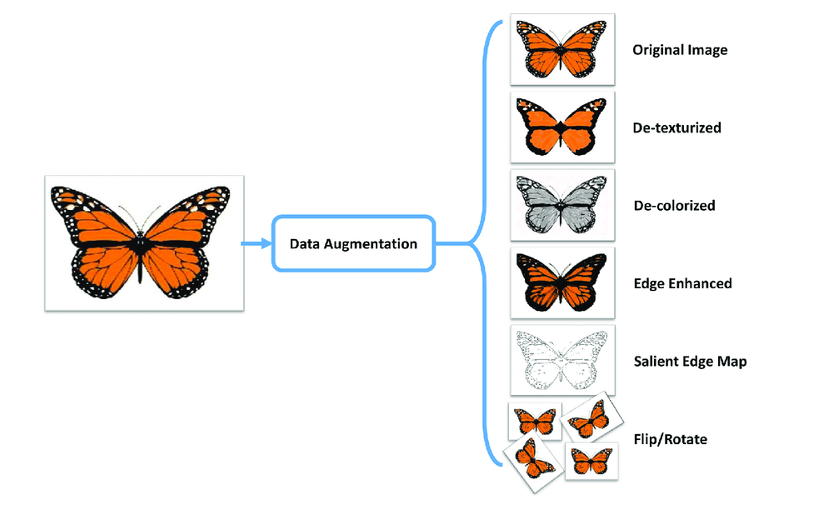

To improve generalization, we applied data augmentation suited to facial imagery, mainly rotations and flips, to enrich the dataset without changing the semantic meaning of the expressions.
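The sketch below shows one way to apply random flips and small rotations to a batch using only core PyTorch. It assumes horizontal flips and a rotation range of ±10 degrees. Both are illustrative choices, since the text does not specify the flip direction or the angle range. The function name `random_flip_rotate` is hypothetical.

```python
import math
import torch
import torch.nn.functional as F


def random_flip_rotate(batch, max_degrees=10.0, flip_prob=0.5, generator=None):
    """Randomly flip (horizontally) and rotate a float batch of shape (N, C, H, W)."""
    n = batch.shape[0]

    # Horizontal flip: mirror the width axis for a random subset of images.
    flip = torch.rand(n, generator=generator) < flip_prob
    flip = flip.to(batch.device).view(n, 1, 1, 1)
    batch = torch.where(flip, batch.flip(dims=[3]), batch)

    # Rotation: one random angle per image, expressed as a 2x3 affine matrix.
    angles = (torch.rand(n, generator=generator) * 2 - 1) * math.radians(max_degrees)
    cos, sin, zeros = torch.cos(angles), torch.sin(angles), torch.zeros(n)
    theta = torch.stack(
        [torch.stack([cos, -sin, zeros], dim=1),
         torch.stack([sin, cos, zeros], dim=1)],
        dim=1,
    ).to(device=batch.device, dtype=batch.dtype)  # shape (N, 2, 3)

    # Resample each image on its rotated grid; pixels from outside become 0.
    grid = F.affine_grid(theta, list(batch.shape), align_corners=False)
    return F.grid_sample(
        batch, grid, mode="bilinear", padding_mode="zeros", align_corners=False
    )


faces = torch.rand(4, 1, 48, 48)
augmented = random_flip_rotate(faces)
print(augmented.shape)
```

Horizontal mirroring and small rotations keep a face recognizable as the same expression, whereas vertical flips or large angles would produce images unlike anything seen at inference time.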

Hyperparameter optimization

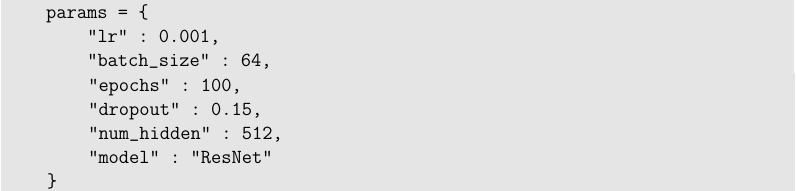

To move beyond manual trial and error, we used Optuna to explore stronger model configurations more efficiently.

The search space covered learning rate, batch size, dropout, hidden dimensions, number of epochs, and architecture choice.

Reading model behavior

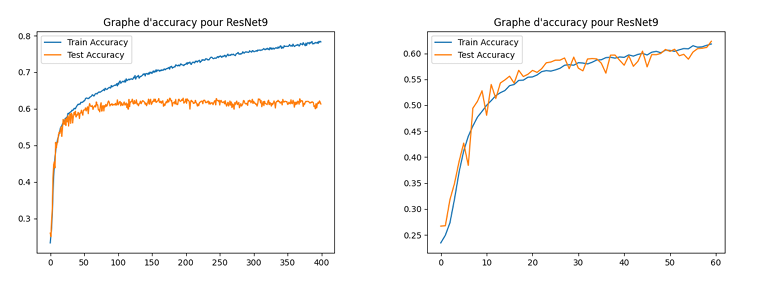

Rather than focusing only on final accuracy, we also analyzed how the models behaved during training and evaluation. Confusion matrices, validation curves, and failed runs revealed recurring weaknesses, especially between visually similar emotions such as sadness and neutral, or fear and anger.
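To illustrate how a confusion matrix surfaces such class pairs, here is a minimal sketch that builds the matrix from labels and predictions and lists the most frequent off-diagonal confusions. The class index order in `CLASSES` and the helper names are assumptions, and the labels are toy values, not project results.

```python
import torch

# Assumed index order for illustration; the dataset's own label order may differ.
CLASSES = ["neutral", "happiness", "sadness", "fear", "anger", "disgust", "surprise"]


def confusion_matrix(y_true, y_pred, num_classes):
    """Rows are true classes, columns are predicted classes."""
    idx = y_true * num_classes + y_pred
    counts = torch.bincount(idx, minlength=num_classes ** 2)
    return counts.reshape(num_classes, num_classes)


def top_confusions(cm, class_names, k=3):
    """Return the k largest off-diagonal cells as (true, predicted, count)."""
    off_diag = cm.clone()
    off_diag.fill_diagonal_(0)  # ignore correct predictions
    n = cm.shape[0]
    top = torch.topk(off_diag.flatten(), k)
    return [
        (class_names[i // n], class_names[i % n], int(c))
        for c, i in zip(top.values.tolist(), top.indices.tolist())
    ]


# Toy labels: "sadness" (2) is mistaken for "neutral" (0) twice.
y_true = torch.tensor([0, 0, 2, 2, 2, 1])
y_pred = torch.tensor([0, 2, 0, 0, 2, 1])
cm = confusion_matrix(y_true, y_pred, len(CLASSES))
print(top_confusions(cm, CLASSES, k=1))
```

Reading the off-diagonal cells rather than overall accuracy shows which specific pairs of emotions a model mixes up, and in which direction.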

The strongest ResNet-based configuration reached about 60% accuracy. That result made clear how much facial emotion recognition depends on both architecture design and the inherent ambiguity of the data itself.

Project Outcome

This project resulted in a full facial emotion recognition pipeline combining computer vision research, deep learning model design, hyperparameter optimization, and real-time inference.

More importantly, it gave us practical experience with PyTorch training workflows, architecture benchmarking, data augmentation, confusion-matrix analysis, and the challenges of translating model performance into usable interactive systems.